Common code to parse and validate various types of descriptors.

Macros

| #define | MIN_ANNOTATION A_PURPOSE |

| #define | MAX_ANNOTATION A_UNKNOWN_ |

| #define | ALLOC_ZERO(sz) memarea_alloc_zero(area,sz) |

| #define | ALLOC(sz) memarea_alloc(area,sz) |

| #define | STRDUP(str) memarea_strdup(area,str) |

| #define | STRNDUP(str, n) memarea_strndup(area,(str),(n)) |

| #define | RET_ERR(msg) |

| #define | MAX_ARGS 512 |

| #define | MAX_UNPARSED_OBJECT_SIZE (128*1024) |

| #define | MAX_LINE_LENGTH (128*1024) |

Functions

| void | token_clear (directory_token_t *tok) |

| int | tokenize_string (memarea_t *area, const char *start, const char *end, smartlist_t *out, token_rule_t *table, int flags) |

| directory_token_t * | get_next_token (memarea_t *area, const char **s, const char *eos, token_rule_t *table) |

| directory_token_t * | find_by_keyword_ (smartlist_t *s, directory_keyword keyword, const char *keyword_as_string) |

| directory_token_t * | find_opt_by_keyword (const smartlist_t *s, directory_keyword keyword) |

| smartlist_t * | find_all_by_keyword (const smartlist_t *s, directory_keyword k) |

Detailed Description

Common code to parse and validate various types of descriptors.

Macro Definition Documentation

◆ RET_ERR

| #define | RET_ERR(msg) |

Function Documentation

◆ find_all_by_keyword()

| smartlist_t * | find_all_by_keyword (const smartlist_t *s, directory_keyword k) |

If there are any directory_token_t entries in s whose keyword is k, return a newly allocated smartlist_t containing all such entries, in the same order in which they occur in s. Otherwise return NULL.
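The contract above (all matches in original order, or NULL when there are none) can be illustrated with a small self-contained sketch. It models the behavior with plain arrays rather than smartlist_t; the names `tok_t`, `tok_list_t`, `collect_by_keyword`, and `tok_list_free` are hypothetical stand-ins and are not part of this file.

```c
#include <assert.h>
#include <stddef.h>
#include <stdlib.h>

/* Hypothetical stand-ins for directory_token_t and smartlist_t. */
typedef struct { int keyword; const char *arg; } tok_t;
typedef struct { const tok_t **items; size_t len; } tok_list_t;

/* Return a newly allocated list of every token in toks[0..n) whose keyword
 * is k, in input order. Return NULL if nothing matches (this sketch also
 * returns NULL if allocation fails). */
static tok_list_t *
collect_by_keyword(const tok_t *toks, size_t n, int k)
{
  size_t count = 0, i;
  for (i = 0; i < n; ++i)
    if (toks[i].keyword == k)
      ++count;
  if (count == 0)
    return NULL;

  tok_list_t *out = malloc(sizeof(*out));
  if (!out)
    return NULL;
  out->items = malloc(count * sizeof(*out->items));
  if (!out->items) {
    free(out);
    return NULL;
  }
  out->len = 0;
  for (i = 0; i < n; ++i)
    if (toks[i].keyword == k)
      out->items[out->len++] = &toks[i];
  return out;
}

/* Release a list returned by collect_by_keyword(); the tokens themselves
 * are not owned by the list and are left alone. */
static void
tok_list_free(tok_list_t *l)
{
  if (!l)
    return;
  free(l->items);
  free(l);
}
```

Because "no matches" is reported as NULL rather than as an empty list, a caller has to test the result before iterating over it.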

◆ find_by_keyword_()

| directory_token_t * | find_by_keyword_ (smartlist_t *s, directory_keyword keyword, const char *keyword_as_string) |

Find the first token in s whose keyword is keyword; fail with an assert if no such keyword is found.

◆ find_opt_by_keyword()

| directory_token_t * | find_opt_by_keyword (const smartlist_t *s, directory_keyword keyword) |

Find the first token in s whose keyword is keyword; return NULL if no such keyword is found.
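find_by_keyword_() and find_opt_by_keyword() perform the same search and differ only in how a missing keyword is handled. The sketch below shows that difference on a plain array; `tok_t`, `first_opt`, and `first_required` are hypothetical names used for illustration only.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical stand-in for directory_token_t. */
typedef struct { int keyword; const char *arg; } tok_t;

/* Optional lookup: first token with keyword k, or NULL if absent. */
static const tok_t *
first_opt(const tok_t *toks, size_t n, int k)
{
  for (size_t i = 0; i < n; ++i)
    if (toks[i].keyword == k)
      return &toks[i];
  return NULL;
}

/* Required lookup: same search, but a missing keyword trips an assertion
 * instead of being reported to the caller. */
static const tok_t *
first_required(const tok_t *toks, size_t n, int k)
{
  const tok_t *t = first_opt(toks, n, k);
  assert(t != NULL);
  return t;
}
```

The asserting form presumably suits keywords the caller already knows must be present; for anything that may legitimately be absent, the NULL-returning form is the safer choice.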

◆ get_next_token()

| directory_token_t * | get_next_token (memarea_t *area, const char **s, const char *eos, token_rule_t *table) |

Helper function: read the next token from *s, advance *s to the end of the token, and return the parsed token. Parse *s according to the list of tokens in table.

Two size limits apply while parsing. Any object of at least MAX_UNPARSED_OBJECT_SIZE (128*1024) is rejected, and any line of at least MAX_LINE_LENGTH (128*1024) is rejected; input that large is probably an overflow, an attack, a bug, or some other nonsense.
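The cursor-advancing style of get_next_token() (read from *s, stop at eos, move *s forward) can be sketched as follows. This is a deliberate simplification: it treats one whole line as one token, ignores the rule table, and returns a view into the input instead of a directory_token_t. `line_view_t`, `next_line`, and `SKETCH_MAX_LINE_LENGTH` are hypothetical names.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Mirrors the value of MAX_LINE_LENGTH listed above. */
#define SKETCH_MAX_LINE_LENGTH (128*1024)

/* Hypothetical result type: a view of one line inside the input buffer. */
typedef struct { const char *start; size_t len; } line_view_t;

/* Read one line from [*s, eos), store a view of it in *out, and advance *s
 * past the line and its trailing newline. Return 0 on success. Return -1,
 * leaving *s untouched, if the input is exhausted or the line is at least
 * SKETCH_MAX_LINE_LENGTH bytes long. */
static int
next_line(const char **s, const char *eos, line_view_t *out)
{
  if (*s >= eos)
    return -1;
  const char *nl = memchr(*s, '\n', (size_t)(eos - *s));
  const char *end = nl ? nl : eos;
  if ((size_t)(end - *s) >= SKETCH_MAX_LINE_LENGTH)
    return -1;
  out->start = *s;
  out->len = (size_t)(end - *s);
  *s = nl ? nl + 1 : eos;
  return 0;
}
```

Passing the cursor as `const char **` lets the caller invoke the function repeatedly on the same buffer without tracking offsets separately.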

◆ token_clear()

| void | token_clear (directory_token_t *tok) |

Free all resources allocated for tok.

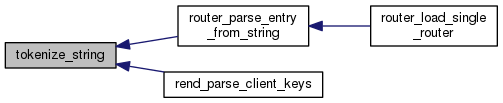

◆ tokenize_string()

| int | tokenize_string (memarea_t *area, const char *start, const char *end, smartlist_t *out, token_rule_t *table, int flags) |

Read all tokens from a string between start and end, and add them to out. Parse according to the token rules in table. Caller must free tokens in out. If end is NULL, use the entire string.
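The range handling described above, including the "end is NULL means the entire string" convention, can be sketched without any of this file's types. The example splits the range into lines and appends each one to a caller-supplied array; it does not apply token rules or flags. `line_view_t` and `split_lines` are hypothetical names.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical result type: a view of one line inside the input buffer. */
typedef struct { const char *start; size_t len; } line_view_t;

/* Split [start, end) into lines and store a view of each in out[0..cap).
 * If end is NULL, use the whole NUL-terminated string. Return the number
 * of lines stored, or -1 if out[] is too small to hold them all. */
static int
split_lines(const char *start, const char *end, line_view_t *out, size_t cap)
{
  if (!end)
    end = start + strlen(start);
  size_t n = 0;
  const char *p = start;
  while (p < end) {
    const char *nl = memchr(p, '\n', (size_t)(end - p));
    const char *stop = nl ? nl : end;
    if (n == cap)
      return -1;
    out[n].start = p;
    out[n].len = (size_t)(stop - p);
    ++n;
    p = nl ? nl + 1 : end;
  }
  return (int)n;
}
```

Here the output array is owned by the caller, so nothing needs freeing; with tokenize_string() itself, the caller is responsible for freeing the tokens placed in out.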

1.8.13
